The pipeline

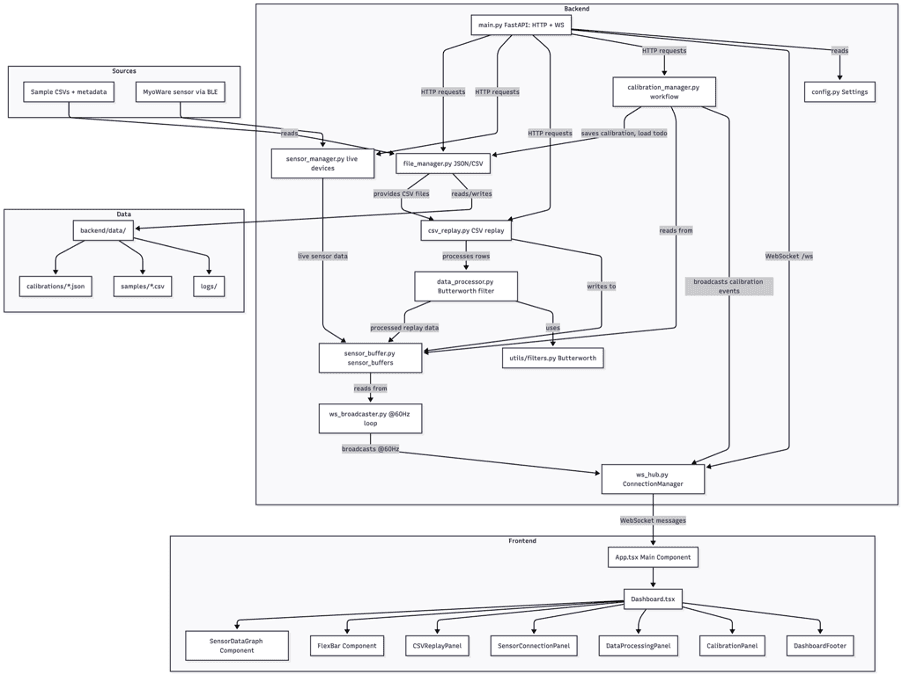

The architecture is split cleanly: sensor input and CSV replay -> processing and buffer registry -> FastAPI backend -> WebSocket broadcaster at 60 Hz -> React/TypeScript frontend with live Plotly graphs. Each stage is an independent module, and that mattered a lot when things changed mid-project.

The calibration workflow walks the user through three guided stages (relaxed, medium and maximum contraction), capturing each one three times, and then generates hysteresis bands at ±10% of max contraction. Those bands get saved as a JSON profile the frontend can reload.
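One way to read that band rule, sketched below under stated assumptions: each stage's band is centred on the mean of its three captures, and its half-width is 10% of the mean maximum contraction. The function name `build_profile`, the field names in the profile and the example amplitudes are all hypothetical; the real profile format may differ.

```python
import json
from statistics import mean


def build_profile(captures, margin=0.10):
    """Build a calibration profile from per-stage captures.

    captures maps a stage name to its captured amplitudes, for example
    {"relaxed": [...], "medium": [...], "max": [...]}. It must contain "max".
    """
    max_level = mean(captures["max"])
    half_width = margin * max_level  # assumed: +/-10% of max contraction
    bands = {}
    for stage, values in captures.items():
        centre = mean(values)
        bands[stage] = {
            "centre": centre,
            "low": max(0.0, centre - half_width),  # amplitudes cannot go below zero
            "high": centre + half_width,
        }
    return {"max_level": max_level, "margin": margin, "bands": bands}


# Made-up amplitudes, three captures per stage
profile = build_profile({
    "relaxed": [0.02, 0.03, 0.04],
    "medium": [0.40, 0.45, 0.50],
    "max": [0.90, 1.00, 1.10],
})

# Serialise to JSON so a frontend could reload it later
profile_json = json.dumps(profile, indent=2)
```

A two-stage profile falls out of the same function by leaving the `"medium"` entry out of `captures`.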

End-to-end latency from sensor sample to frontend render is 13–16 ms typically. The brief asked for under 50 ms, which we beat comfortably.

Hardware

Two sensors: Delsys (professional, lab-based, excellent signal quality) and MyoWare 2.0 (cheap, Bluetooth Low Energy, runs off an ESP32 strapped to your arm). The plan was Delsys for everything. The reality was that the Delsys equipment needed lab access and its live-streaming required a software license we couldn't run independently, so MyoWare ended up doing most of the work during development.

MyoWare had problems. BLE connections from a desktop Python client kept dropping: the connection would last about 30 s and then go dark. It worked perfectly fine streaming to our phones, so the issue was specific to the desktop BLE client, which couldn't keep up with the rate at which the ESP32 firmware was sending notifications. The fix was to throttle the notification flood on the ESP32 side with a loop delay, so that desktop BLE clients could handle the amount of data we were sending (even so, we were still sitting at a comfortable 100 Hz). After that it was stable.

The other MyoWare problem: it can't reliably distinguish a medium contraction from a maximum one. The original plan was a three-stage calibration model (relaxed, medium, max). With MyoWare you get two clean stages. We adjusted the workflow (for the MyoWare sensor type only) rather than pretending the data was better than it was.

My role

I was the team lead for the full year. I ran 15 internal team meetings and 10 supervisor meetings, wrote and scoped the majority of the 77 Linear issues, and reviewed and merged every MR personally. I also worked on the CI alongside my teammate, Jack Moocarne.

On the CI: every push ran lint (black, eslint, ruff), type checks (mypy, TypeScript), security checks (bandit, audit), and pytest before anything could merge into dev. Getting that reliable and fast took a while: the first version ran for 12 minutes. I got it down to under 5 by splitting jobs by code path so they only ran when relevant files changed, along with other improvements to the pipeline runner backend deployed on my own VPS.

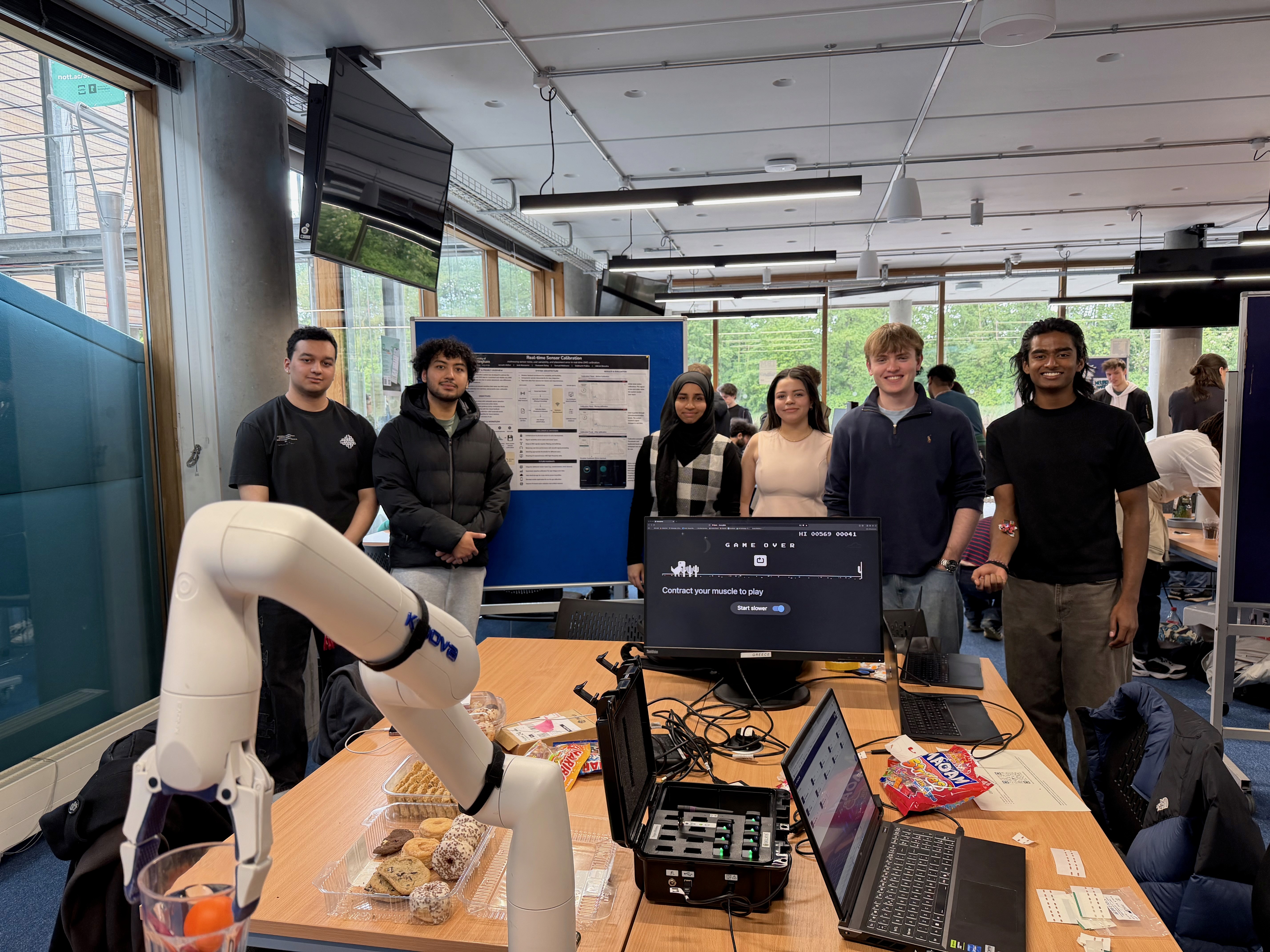

Technically I built the initial backend scaffold, CSV replay and the WebSocket hub. I connected the MyoWare over BLE including the firmware fix above. The most interesting work was in the final week: Lucas had written a Kinova robotic gripper controller, and I wired the calibrated hysteresis band states through the WebSocket output into it. Activate a muscle, the gripper closes. On top of that, a keyboard emulator converts active band states into OS-level key presses, which is what made the demo game integration work.

The management decision I'd call most impactful was introducing the ownership split in term two. Before that, people picked issues from the backlog based on preference, which was fine while the codebase was small. By the second term it meant unclear ownership and repeated context-building from scratch. Giving each person end-to-end responsibility for a component measurably improved pace and accountability.

What worked and what didn't

The modular architecture was the right call early. Adding MyoWare didn't require touching calibration logic. Adding Delsys live-streaming later slotted into the same sensor interface. When Lucas's Kinova script needed to consume calibrated state, the WebSocket output was already there. None of that would've been easy with a tightly coupled design.
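The shape of that decoupling can be sketched with a small sensor interface. This is an illustration only: `SensorSource`, `CsvReplaySource`, `ConstantSource` and the `emg` column name are hypothetical and not taken from the project. Downstream code depends only on the interface, so swapping recorded data for a live device does not touch it.

```python
import csv
import io
from typing import Iterator, Protocol, TextIO


class SensorSource(Protocol):
    """Anything that can yield EMG samples, whatever the hardware behind it."""

    name: str

    def samples(self) -> Iterator[float]: ...


class CsvReplaySource:
    """Replays a recorded session from a CSV file-like object."""

    def __init__(self, handle: TextIO, column: str = "emg") -> None:
        self.name = "csv-replay"
        self._handle = handle
        self._column = column

    def samples(self) -> Iterator[float]:
        for row in csv.DictReader(self._handle):
            yield float(row[self._column])


class ConstantSource:
    """Stand-in for a live sensor; emits the same value a fixed number of times."""

    def __init__(self, value: float, count: int) -> None:
        self.name = "constant"
        self._value = value
        self._count = count

    def samples(self) -> Iterator[float]:
        for _ in range(self._count):
            yield self._value


def peak(source: SensorSource) -> float:
    # Downstream logic sees only the interface, never the concrete sensor
    return max(abs(sample) for sample in source.samples())


recorded = io.StringIO("t,emg\n0.00,0.1\n0.01,-0.4\n0.02,0.3\n")
replay_peak = peak(CsvReplaySource(recorded))
live_peak = peak(ConstantSource(0.2, count=5))
```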

CSV replay as a development mode was also the right call: hardware was regularly unavailable, and being able to run the full pipeline against recorded data kept progress moving. The trade-off is that you can't fully validate a system built on biological signals without the actual hardware.

The thing I'd change: consistent access to a reliable Delsys baseline rather than replacing it with MyoWare. MyoWare is more portable but also less reliable, and the extended period when the hardware was unavailable was the hardest stretch to keep momentum through. Portability matters less than reliability during development.

User evaluation showed 100% of testers agreed the system was responsive with low latency, and 75% found sensor information easy to locate. The calibration clarity scores had more variance: some users found the two-stage workflow limiting, which tracks with MyoWare's inability to separate medium from maximum contraction.

Downstream use

The calibration output drove two things by demo day: a Kinova robotic gripper (relax = open, max contraction = close, medium = hold) and a keyboard emulator that converted band states into key presses, feeding into an interactive game. EMG without calibration is just a number that means something different for every person who looks at it. The gripper and the keyboard emulator only worked because there was a consistent, normalised signal underneath them, one that had already accounted for how that specific user's muscle behaves. That's the actual value of calibration: it's what converts a noisy biological input into something a downstream system can act on reliably.
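A minimal sketch of how band states might turn into gripper commands, assuming each band is a `(low, high)` pair and that a level falling in the gap between bands keeps the previous state (which is what gives the hysteresis). `BandClassifier`, the band values and the command strings are illustrative assumptions; only the relax/medium/max mapping comes from the description above.

```python
from typing import Dict, List, Tuple

# Mapping described above: relax = open, medium = hold, max contraction = close
GRIPPER_COMMANDS = {"relaxed": "open", "medium": "hold", "max": "close"}


class BandClassifier:
    """Hypothetical classifier: tracks which calibrated band the signal is in."""

    def __init__(self, bands: Dict[str, Tuple[float, float]], initial: str = "relaxed") -> None:
        self.bands = bands
        self.state = initial

    def update(self, level: float) -> str:
        # Change state only when the level lands inside a band; in the gaps
        # between bands the previous state is held, so noise near a boundary
        # does not make the output flicker.
        for stage, (low, high) in self.bands.items():
            if low <= level <= high:
                self.state = stage
                break
        return self.state


def commands_for(levels: List[float], classifier: BandClassifier) -> List[str]:
    return [GRIPPER_COMMANDS[classifier.update(level)] for level in levels]


# Made-up bands; the top band is open-ended so over-strong contractions still count as max
bands = {
    "relaxed": (0.0, 0.13),
    "medium": (0.35, 0.55),
    "max": (0.90, float("inf")),
}
commands = commands_for([0.05, 0.20, 0.40, 0.60, 0.95, 0.70, 0.10], BandClassifier(bands))
```

A keyboard emulator could reuse the same classifier with a different lookup table from state to key.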